Lead2Pass: Latest Free Oracle 1Z0-060 Dumps (71-80) Download!

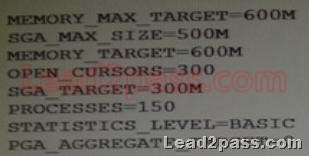

QUESTION 71

To enable the Database Smart Flash Cache, you configure the following parameters:

DB_FLASH_CACHE_FILE = '/dev/flash_device_1', '/dev/flash_device_2'

DB_FLASH_CACHE_SIZE=64G

What is the result when you start up the database instance?

A. It results in an error because these parameter settings are invalid.

B. One 64G flash cache file will be used.

C. Two 64G flash cache files will be used.

D. Two 32G flash cache files will be used.

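Answer: A

Explanation:

DB_FLASH_CACHE_SIZE takes one size for each file listed in DB_FLASH_CACHE_FILE, and files and sizes are matched in the order given. Here two files are listed but only one size, so the counts do not match and an error is raised at startup.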
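For comparison, a configuration that pairs each flash device with its own size might look like the sketch below. The 32G values are illustrative assumptions, not part of the question; the device paths are the ones from the question.

```sql
-- One size per file, matched in the order listed.
-- Both settings are written to the spfile and take effect at the next startup.
ALTER SYSTEM SET db_flash_cache_file =
  '/dev/flash_device_1', '/dev/flash_device_2' SCOPE=SPFILE;

-- The 32G sizes are assumed values chosen only for illustration.
ALTER SYSTEM SET db_flash_cache_size = 32G, 32G SCOPE=SPFILE;
```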

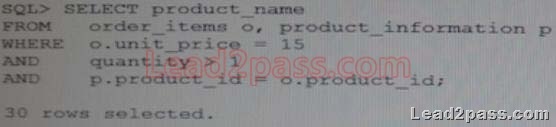

QUESTION 72

You executed this command to create a password file:

$ orapwd file = orapworcl entries = 10 ignorecase = N

Which two statements are true about the password file?

A. It will permit the use of uppercase passwords for database users who have been granted the SYSOPER role.

B. It contains usernames and passwords of database users who are members of the OSOPER operating system group.

C. It contains usernames and passwords of database users who are members of the OSDBA operating system group.

D. It will permit the use of lowercase passwords for database users who have been granted the SYSDBA role.

E. It will not permit the use of mixed case passwords for the database users who have been granted the SYSDBA role.

Answer: AD

Explanation:

*You can create a password file using the password file creation utility, ORAPWD.

* Adding Users to a Password File

When you grant SYSDBA or SYSOPER privileges to a user, that user’s name and privilege information are added to the password file. If the server does not have an EXCLUSIVE password file (that is, if the initialization parameter REMOTE_LOGIN_PASSWORDFILE is NONE or SHARED, or the password file is missing), Oracle Database issues an error if you attempt to grant these privileges.

A user’s name remains in the password file only as long as that user has at least one of these two privileges. If you revoke both of these privileges, Oracle Database removes the user from the password file.
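The effect of granting and revoking these privileges can be observed in the V$PWFILE_USERS view. A minimal sketch, assuming an EXCLUSIVE password file and a hypothetical user named hr_admin:

```sql
-- hr_admin is a hypothetical user name used only for illustration.
GRANT SYSDBA TO hr_admin;

-- List the users currently recorded in the password file.
SELECT username, sysdba, sysoper
FROM   v$pwfile_users;

-- Revoking the user's last administrative privilege removes the entry again.
REVOKE SYSDBA FROM hr_admin;
```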

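*The syntax of the ORAPWD command is as follows:

ORAPWD FILE=filename [ENTRIES=numusers] [FORCE={Y|N}] [IGNORECASE={Y|N}] [NOSYSDBA={Y|N}]

*IGNORECASE

If this argument is set to Y, passwords are case-insensitive. That is, case is ignored when comparing the password that the user supplies during login with the password in the password file. With IGNORECASE=N, as in the command above, passwords are case-sensitive, so uppercase, lowercase, and mixed-case passwords are all permitted and are compared exactly as typed.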

QUESTION 73

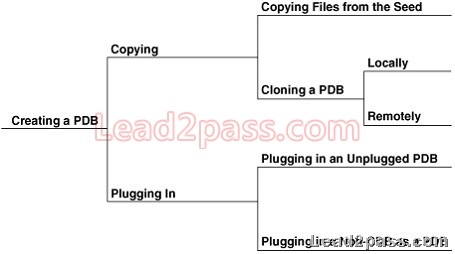

Identify three valid methods of opening pluggable databases (PDBs).

A. ALTER PLUGGABLE DATABASE OPEN ALL issued from the root

B. ALTER PLUGGABLE DATABASE OPEN ALL issued from a PDB

C. ALTER PLUGGABLE DATABASE PDB OPEN issued from the seed

D. ALTER DATABASE PDB OPEN issued from the root

E. ALTER DATABASE OPEN issued from that PDB

F. ALTER PLUGGABLE DATABASE PDB OPEN issued from another PDB

G. ALTER PLUGGABLE DATABASE OPEN issued from that PDB

Answer: AEG

Explanation:

E: You can perform all ALTER PLUGGABLE DATABASE tasks by connecting to a PDB and running the corresponding ALTER DATABASE statement. This functionality is provided to maintain backward compatibility for applications that have been migrated to a CDB environment.

A, G: When you issue an ALTER PLUGGABLE DATABASE OPEN statement, READ WRITE is the default unless a PDB being opened belongs to a CDB that is used as a physical standby database, in which case READ ONLY is the default.

You can specify which PDBs to modify in the following ways:

List one or more PDBs.

Specify ALL to modify all of the PDBs.

Specify ALL EXCEPT to modify all of the PDBs, except for the PDBs listed.
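The three ways of naming PDBs, and the two statements that work from inside a PDB, can be sketched as follows. The PDB names pdb1 and pdb2 are hypothetical. Note that in the actual statement syntax the ALL keyword comes before OPEN, even though the answer options write it the other way round.

```sql
-- Connected to the root (CDB$ROOT) as a suitably privileged common user.
ALTER PLUGGABLE DATABASE pdb1, pdb2 OPEN READ WRITE;   -- list one or more PDBs
ALTER PLUGGABLE DATABASE ALL OPEN;                     -- every PDB
ALTER PLUGGABLE DATABASE ALL EXCEPT pdb1 OPEN;         -- every PDB except pdb1

-- Connected to a single PDB instead: either statement below opens that PDB.
-- They are alternatives; running the second after the first would fail
-- because the PDB is already open.
ALTER SESSION SET CONTAINER = pdb1;
ALTER PLUGGABLE DATABASE OPEN;
-- ALTER DATABASE OPEN;
```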

QUESTION 74

You administer an online transaction processing (OLTP) system whose database is stored in Automatic Storage Management (ASM) and whose disk groups use normal redundancy.

One of the ASM disks goes offline, and is then dropped because it was not brought online before DISK_REPAIR_TIME elapsed.

When the disk is replaced and added back to the disk group, the ensuing rebalance operation is too slow.

Which two recommendations should you make to speed up the rebalance operation if this type of failure happens again?

A. Increase the value of the ASM_POWER_LIMIT parameter.

B. Set the DISK_REPAIR_TIME disk attribute to a lower value.

C. Specify the POWER clause in the statement that adds the disk back to the disk group.

D. Increase the number of ASMB processes.

E. Increase the number of DBWR_IO_SLAVES in the ASM instance.

Answer: AC

Explanation:

A: ASM_POWER_LIMIT specifies the maximum power on an Automatic Storage Management instance for disk rebalancing. The higher the limit, the faster rebalancing will complete. Lower values will take longer, but consume fewer processing and I/O resources.

C: Normally a separate process is started to perform the rebalance, which takes a certain amount of time. If you want it to finish faster, start more processes. You tell ASM it can use more processes by increasing the rebalance power, for example with the POWER clause of the statement that adds the disk.

*ASMB

ASMB is a background process that communicates with the ASM instance, managing storage and providing statistics. The number of ASMB processes is not something you raise to speed up a rebalance, so option D does not apply.

Incorrect:

Not B: A higher, not a lower, value of DISK_REPAIR_TIME would be helpful here.

Not E: If you implement database writer I/O slaves by setting the DBWR_IO_SLAVES parameter, you configure a single (master) DBWR process that has slave processes that are subservient to it. In addition, I/O slaves can be used to "simulate" asynchronous I/O on platforms that do not support asynchronous I/O or implement it inefficiently. Database I/O slaves provide non-blocking, asynchronous requests to simulate asynchronous I/O.
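The settings discussed above could be applied roughly as follows. This is a sketch only: the disk group name data, the disk path, the power value 8, and the repair time 8h are assumptions chosen for illustration. The statements are run in the ASM instance.

```sql
-- Raise the default rebalance power for the ASM instance.
ALTER SYSTEM SET asm_power_limit = 8;

-- Or set the power for one operation when adding the replacement disk.
-- '/devices/diska5' is a hypothetical path.
ALTER DISKGROUP data ADD DISK '/devices/diska5' REBALANCE POWER 8;

-- A longer repair window gives more time to bring a disk back online
-- before it is dropped.
ALTER DISKGROUP data SET ATTRIBUTE 'disk_repair_time' = '8h';

-- Monitor the running rebalance.
SELECT operation, state, power, est_minutes
FROM   v$asm_operation;
```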

QUESTION 75

You are administering a database and you receive a requirement to apply the following restrictions:

1. A connection must be terminated after four unsuccessful login attempts by a user.

2. A user should not be able to create more than four simultaneous sessions.

3. A user session must be terminated after 15 minutes of inactivity.

4. Users must be prompted to change their passwords every 15 days.

How would you accomplish these requirements?

A. By granting a secure application role to the users

B. By creating and assigning a profile to the users and setting the REMOTE_OS_AUTHENT parameter to FALSE

C. By creating and assigning a profile to the users and setting the SEC_MAX_FAILED_LOGIN_ATTEMPTS parameter to 4

D. By implementing Fine-Grained Auditing (FGA) and setting the REMOTE_LOGIN_PASSWORDFILE parameter to NONE

E. By implementing the Database Resource Manager plan and setting the SEC_MAX_FAILED_LOGIN_ATTEMPTS parameter to 4

Answer: C

Explanation:

Requirements 2, 3, and 4 map to profile limits: SESSIONS_PER_USER caps the number of concurrent sessions, IDLE_TIME (in minutes) ends idle sessions, and PASSWORD_LIFE_TIME (in days) forces periodic password changes. Requirement 1 is met by SEC_MAX_FAILED_LOGIN_ATTEMPTS, which specifies the number of authentication attempts that can be made by a client on a connection to the server process. After the specified number of failed attempts, the connection is automatically dropped by the server process.

Incorrect:

Not A: A secure application role is a role that is associated with a PL/SQL procedure (or a PL/SQL package that contains multiple procedures). The procedure validates the user and, if the validation passes, grants the role so that the user can use the application. The user has this role only while logged in to the application. It controls privileges, not login attempts, session counts, idle time, or password expiry.

Not B: REMOTE_OS_AUTHENT specifies whether remote clients will be authenticated with the value of the OS_AUTHENT_PREFIX parameter.

Not E: A Resource Manager plan does not enforce password expiration.

Not D: Fine-grained auditing records access and does not enforce limits. REMOTE_LOGIN_PASSWORDFILE specifies whether Oracle checks for a password file.

Values:

shared

One or more databases can use the password file. The password file can contain SYS as well as non-SYS users.

exclusive

The password file can be used by only one database. The password file can contain SYS as well as non-SYS users.

none

Oracle ignores any password file. Therefore, privileged users must be authenticated by the

operating system.

Note:

The REMOTE_OS_AUTHENT parameter is deprecated. It is retained for backward compatibility only.
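For reference, the four restrictions in the question can be sketched with a profile plus one initialization parameter. The profile name app_user_limits and the user app_user are hypothetical names used only for illustration.

```sql
-- Requirements 2, 3 and 4: session count, idle time (minutes),
-- and password lifetime (days).
CREATE PROFILE app_user_limits LIMIT
  SESSIONS_PER_USER   4
  IDLE_TIME           15
  PASSWORD_LIFE_TIME  15;

ALTER USER app_user PROFILE app_user_limits;

-- Kernel resource limits such as SESSIONS_PER_USER and IDLE_TIME are
-- enforced only when RESOURCE_LIMIT is TRUE.
ALTER SYSTEM SET resource_limit = TRUE;

-- Requirement 1: drop the connection after four failed attempts.
-- The parameter is static, so it takes effect after a restart.
ALTER SYSTEM SET sec_max_failed_login_attempts = 4 SCOPE=SPFILE;
```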

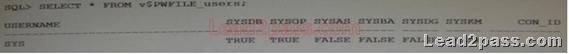

QUESTION 76

A senior DBA asked you to execute the following command to improve performance:

SQL> ALTER TABLE subscribe_log STORAGE (BUFFER_POOL recycle);

You checked the data in the SUBSCRIBE_LOG table and found that it is a large table containing one million rows.

What could be a reason for this recommendation?

A. The keep pool is not configured.

B. Automatic Workarea Management is not configured.

C. Automatic Shared Memory Management is not enabled.

D. The data blocks in the SUBSCRIBE_LOG table are rarely accessed.

E. All the queries on the SUBSCRIBE_LOG table are rewritten to a materialized view.

Answer: D

Explanation:

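The recycle pool is intended for large segments whose blocks are rarely reused. Assigning SUBSCRIBE_LOG to it keeps the table's rarely accessed blocks from pushing more frequently used blocks out of the default buffer cache.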
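A minimal sketch of the surrounding setup, assuming the recycle pool has not been sized yet. The 64M value is an assumption for illustration, not a recommendation.

```sql
-- The recycle pool must have a nonzero size before it is useful.
ALTER SYSTEM SET db_recycle_cache_size = 64M;

-- Assign the table to the recycle pool, as in the question.
ALTER TABLE subscribe_log STORAGE (BUFFER_POOL RECYCLE);

-- Confirm the assignment.
SELECT table_name, buffer_pool
FROM   user_tables
WHERE  table_name = 'SUBSCRIBE_LOG';
```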

QUESTION 77

Which three tasks can be automatically performed by the Automatic Data Optimization feature of Information Lifecycle Management (ILM)?

A. Tracking the most recent read time for a table segment in a user tablespace

B. Tracking the most recent write time for a table segment in a user tablespace

C. Tracking insert time by row for table rows

D. Tracking the most recent write time for a table block

E. Tracking the most recent read time for a table segment in the SYSAUX tablespace

F. Tracking the most recent write time for a table segment in the SYSAUX tablespace

Answer: ABC

Explanation:

*You can specify policies for ADO at the row, segment, and tablespace level when creating and altering tables with SQL statements.

* (Not E, Not F) When Heat Map is enabled, all accesses are tracked by the in-memory activity tracking module. Objects in the SYSTEM and SYSAUX tablespaces are not tracked.

*To implement your ILM strategy, you can use Heat Map in Oracle Database to track data access and modification. Heat Map provides data access tracking at the segment level and data modification tracking at the segment and row level.

*You can also use Automatic Data Optimization (ADO) to automate the compression and movement of data between different tiers of storage within the database.
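Heat Map tracking and an ADO policy could be set up along these lines. The table name orders and the 30-day threshold are assumptions chosen for illustration.

```sql
-- Turn on Heat Map tracking for the instance.
ALTER SYSTEM SET heat_map = ON;

-- Hypothetical segment-level policy: compress the table's segment
-- once it has gone 30 days without modification.
ALTER TABLE orders ILM ADD POLICY
  ROW STORE COMPRESS ADVANCED SEGMENT
  AFTER 30 DAYS OF NO MODIFICATION;

-- Inspect the most recent read and write times tracked per segment.
SELECT object_name, segment_write_time, segment_read_time
FROM   dba_heat_map_segment
WHERE  object_name = 'ORDERS';
```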

QUESTION 78

Which two partitioned table maintenance operations support asynchronous Global Index Maintenance in Oracle database 12c?

A. ALTER TABLE SPLIT PARTITION

B. ALTER TABLE MERGE PARTITION

C. ALTER TABLE TRUNCATE PARTITION

D. ALTER TABLE ADD PARTITION

E. ALTER TABLE DROP PARTITION

F. ALTER TABLE MOVE PARTITION

Answer: CE

Explanation:

Asynchronous Global Index Maintenance for DROP and TRUNCATE PARTITION: This feature enables global index maintenance to be delayed and decoupled from a DROP and TRUNCATE partition without making a global index unusable. Enhancements include faster DROP and TRUNCATE partition operations and the ability to delay index maintenance to off-peak time.
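A rough sketch of how this looks in practice. The schema SH, the table sales, its partitions, and the index sales_gidx are hypothetical names used only for illustration.

```sql
-- The partition operations return quickly; global index entries that
-- pointed into the removed partitions are left behind as orphans.
ALTER TABLE sales DROP PARTITION sales_q1_2012 UPDATE INDEXES;
ALTER TABLE sales TRUNCATE PARTITION sales_q2_2012 UPDATE INDEXES;

-- Indexes remain usable; ORPHANED_ENTRIES shows whether cleanup is pending.
SELECT index_name, status, orphaned_entries
FROM   user_indexes
WHERE  table_name = 'SALES';

-- Clean up later, at an off-peak time, for one index...
ALTER INDEX sales_gidx COALESCE CLEANUP;

-- ...or for all global indexes of the table.
BEGIN
  DBMS_PART.CLEANUP_GIDX('SH', 'SALES');
END;
/
```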

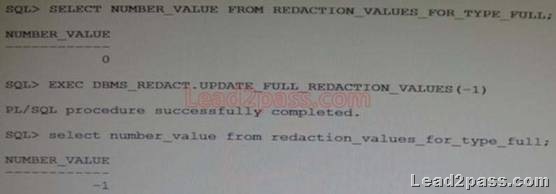

QUESTION 79

You configure your database instance to support shared server connections.

Which two memory areas that are part of the PGA are stored in the SGA instead for shared server connections?

A. User session data

B. Stack space

C. Private SQL area

D. Location of the runtime area for DML and DDL Statements

E. Location of a part of the runtime area for SELECT statements

Answer: AC

Explanation:

A: The PGA itself is subdivided. The UGA (User Global Area) contains session state information, including package-level variables, cursor state, and so on. Note that, with shared server, the UGA is in the SGA. It has to be, because shared server means that the session state needs to be accessible to all server processes, as any one of them could be assigned a particular session. However, with dedicated server (which is likely what you're using), the UGA is allocated in the PGA.

C: The location of a private SQL area depends on the type of connection established for a session. If a session is connected through a dedicated server, private SQL areas are located in the server process's PGA. However, if a session is connected through a shared server, part of the private SQL area is kept in the SGA.
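To see which sessions use a shared server and how much UGA memory each holds, something like the following could be used. The values 5 and 2 are illustrative assumptions. The query reports the size of each session's UGA, not where it is allocated.

```sql
-- Enable shared server with assumed, illustrative values.
ALTER SYSTEM SET shared_servers = 5;
ALTER SYSTEM SET dispatchers = '(PROTOCOL=TCP)(DISPATCHERS=2)';

-- SERVER shows DEDICATED, SHARED, or NONE (a shared server session that is
-- currently idle); the statistic gives the session's UGA size in bytes.
SELECT s.sid, s.server, st.value AS uga_bytes
FROM   v$session  s
JOIN   v$sesstat  st ON st.sid = s.sid
JOIN   v$statname n  ON n.statistic# = st.statistic#
WHERE  n.name = 'session uga memory';
```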

Note:

*System global area (SGA)

The SGA is a group of shared memory structures, known as SGA components, that contain data and control information for one Oracle Database instance. The SGA is shared by all server and background processes. Examples of data stored in the SGA include cached data blocks and shared SQL areas.

* Program global area (PGA)

A PGA is a memory region that contains data and control information for a server process. It is nonshared memory created by Oracle Database when a server process is started. Access to the PGA is exclusive to the server process. There is one PGA for each server process. Background processes also allocate their own PGAs. The total memory used by all individual PGAs is known as the total instance PGA memory, and the collection of individual PGAs is referred to as the total instance PGA, or just instance PGA. You use database initialization parameters to set the size of the instance PGA, not individual PGAs.

Reference: Oracle Database Concepts 12c

QUESTION 80

Which two statements are true about Oracle Managed Files (OMF)?

A. OMF cannot be used in a database that already has data files created with user-specified directories.

B. The file system directories that are specified by OMF parameters are created automatically.

C. OMF can be used with ASM disk groups, as well as with raw devices, for better file management.

D. OMF automatically creates unique file names for tablespaces and control files.

E. OMF may affect the location of the redo log files and archived log files.

Answer: DE

Explanation:

D: Through initialization parameters, you specify the file system directory to be used for a particular type of file. The database then ensures that a unique file, an Oracle-managed file, is created and deleted when no longer needed.

E: The database internally uses standard file system interfaces to create and delete files as needed for the following database structures, which include redo log files and archived logs:

Tablespaces

Redo log files

Control files

Archived logs

Block change tracking files

Flashback logs

RMAN backups

Incorrect:

Not B: The file system directories named by the OMF parameters must already exist. The database does not create them.

Note:

*Using Oracle-managed files simplifies the administration of an Oracle Database. Oracle-managed files eliminate the need for you, the DBA, to directly manage the operating system files that make up an Oracle Database. With Oracle-managed files, you specify file system directories in which the database automatically creates, names, and manages files at the database object level. For example, you need only specify that you want to create a tablespace; you do not need to specify the name and path of the tablespace’s datafile with the DATAFILE clause.
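A minimal sketch of OMF in use. All directory paths, the 10G size, and the tablespace name app_data are hypothetical, and the directories are assumed to exist already.

```sql
-- Default locations for data files, online redo logs, and recovery files.
ALTER SYSTEM SET db_create_file_dest = '/u01/app/oracle/oradata';
ALTER SYSTEM SET db_create_online_log_dest_1 = '/u02/oradata';

-- The size must be set before the fast recovery area location.
ALTER SYSTEM SET db_recovery_file_dest_size = 10G;
ALTER SYSTEM SET db_recovery_file_dest = '/u03/fra';

-- No DATAFILE clause: the database picks a unique name and location.
CREATE TABLESPACE app_data;

-- No file specification: an Oracle-managed redo log group is added.
ALTER DATABASE ADD LOGFILE;

-- See the generated data file name.
SELECT file_name
FROM   dba_data_files
WHERE  tablespace_name = 'APP_DATA';
```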

If you want to pass the Oracle 12c 1Z0-060 exam successfully, we recommend reading the full version of the latest Oracle 12c 1Z0-060 dumps.
